Symbolic AI vs Neural Networks: A Battle of Two Theories

From logic machines to trillion-parameter language models: the seventy-year battle between two fundamentally different theories of intelligence, and why the answer in the end might be both.

The history of artificial intelligence is, at its core, a debate about the nature of thought itself. Can intelligence be reduced to the manipulation of symbols according to formal rules, like a mathematician working through a proof? Or does it emerge from the statistical patterns learned by billions of simple, interconnected units, more like the way a child learns to recognise a face?

This question has shaped every major research programme, funding cycle, and AI winter since the field's founding in 1956. Understanding this history is not merely academic; it is essential for grasping why modern AI systems work the way they do, where they fail, and what is likely to come next.

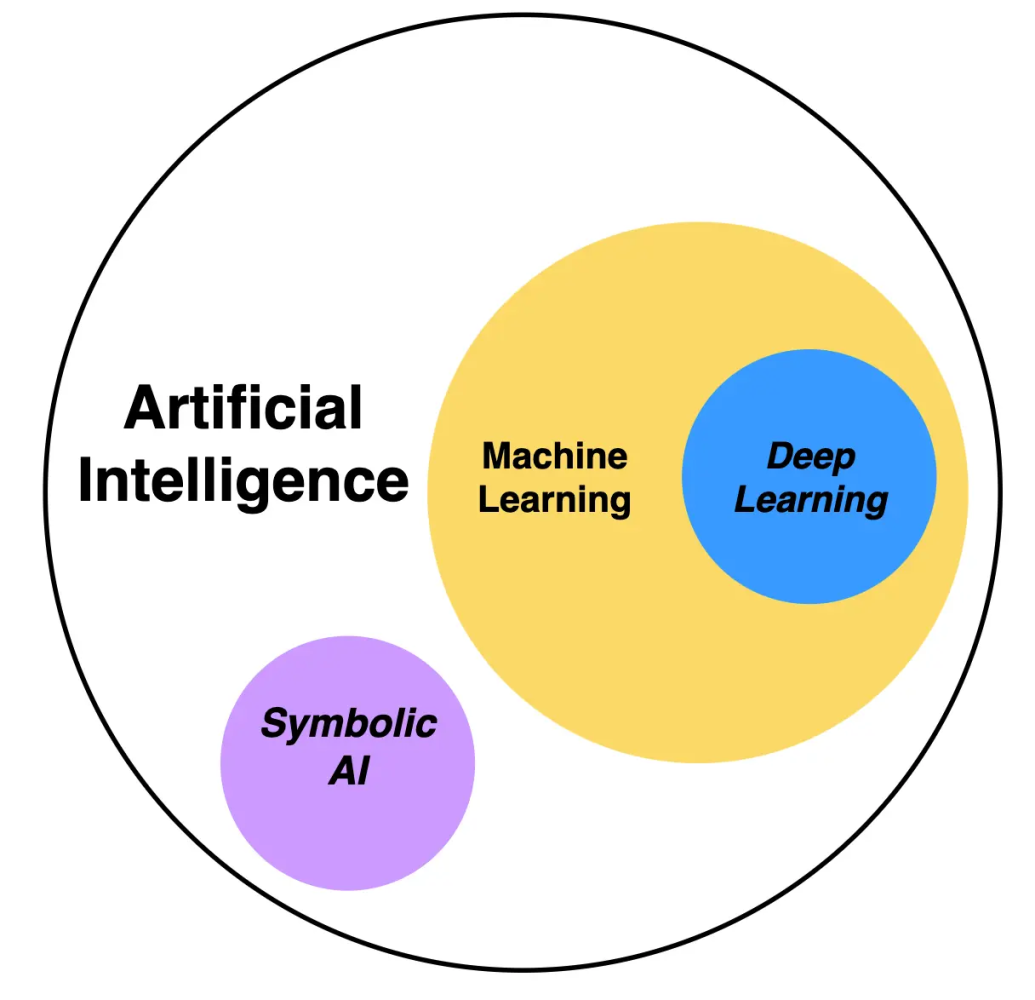

Two paradigms of intelligence

Before diving into the history, it helps to draw the boundary clearly.

Symbolic AI (also: Good Old-Fashioned AI, GOFAI) holds that intelligence consists of the manipulation of symbols (discrete, human-readable tokens) according to rules. Knowledge is represented explicitly: as logical propositions, ontologies, production rules, or semantic networks. Reasoning proceeds by search, inference, or theorem proving over these structures.

Core assumption: intelligence = formal symbol manipulation.

Connectionism / Neural Networks holds that intelligence emerges from the interaction of many simple processing units (neurons) connected by weighted edges. Knowledge is represented implicitly, distributed across millions or billions of learned parameters. Reasoning is pattern matching: the network maps inputs to outputs through a cascade of nonlinear transformations.

Core assumption: intelligence = learned statistical patterns.

These two paradigms make fundamentally different bets about what matters: structure vs scale, interpretability vs performance, precision vs generality. The history of AI is the story of their alternating dominance.

The symbolic era (1956–1980s)

The field of AI was born symbolic. At the 1956 Dartmouth workshop, organised by John McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon, the founding vision was that "every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it." The tools they reached for were logic and search.

In the years that followed, the symbolic programme produced a remarkable series of achievements:

- Logic Theorist (1956): Newell and Simon's program proved 38 of the first 52 theorems in Chapter 2 of Russell and Whitehead's Principia Mathematica. It was the first program to automate deductive reasoning.

- General Problem Solver (1957): Newell and Simon's GPS used means-ends analysis to solve puzzles like the Tower of Hanoi and missionaries and cannibals. It formalised the idea of problem solving as search through a state space.

- LISP (1958): McCarthy invented LISP, a programming language based on lambda calculus, designed specifically for symbolic AI. It remained the lingua franca of AI research for three decades.

- ELIZA (1966): Weizenbaum's chatbot used pattern matching and substitution rules to simulate a Rogerian therapist. It demonstrated how little intelligence was needed to produce convincing dialogue, and how willing humans were to anthropomorphise machines.

- SHRDLU (1970): Winograd's natural language system could understand and execute commands in a microworld of coloured blocks. It was a triumph of symbolic NLP, and a preview of the brittleness problem.

Logic programming & expert systems

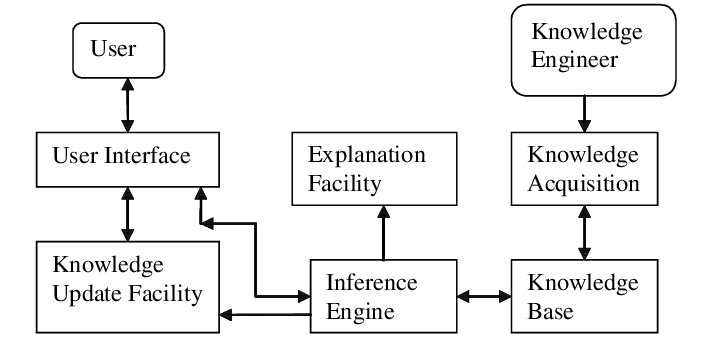

By the late 1970s, the most commercially successful branch of symbolic AI was the expert system. An expert system encodes domain knowledge as a set of IF-THEN production rules and uses an inference engine to chain through them.

The canonical architecture:

- Knowledge Base (KB): IF patient has fever AND rash AND stiff_neck THEN suspect meningitis (confidence 0.85)

- Inference Engine: forward chaining (given facts → derive conclusions) and backward chaining (given goal → find supporting facts)

- Working Memory: current set of asserted facts about the case
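As a rough illustration of forward chaining over such a knowledge base, here is a minimal Python sketch. It assumes rules are plain (premises, conclusion) pairs and leaves out confidence factors entirely; the second rule and all identifiers are hypothetical, and this is not how MYCIN or any real expert-system shell was implemented.

```python
from typing import FrozenSet, List, Set, Tuple

Rule = Tuple[FrozenSet[str], str]  # (premises, conclusion)

# Illustrative knowledge base; the second rule is a made-up follow-up.
RULES: List[Rule] = [
    (frozenset({"fever", "rash", "stiff_neck"}), "suspect_meningitis"),
    (frozenset({"suspect_meningitis"}), "order_lumbar_puncture"),
]


def forward_chain(facts: Set[str], rules: List[Rule]) -> Set[str]:
    """Fire every rule whose premises all hold until nothing new is derived."""
    working_memory = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= working_memory and conclusion not in working_memory:
                working_memory.add(conclusion)
                changed = True
    return working_memory


derived = forward_chain({"fever", "rash", "stiff_neck"}, RULES)
```

Backward chaining would run the same rules in the other direction: start from a goal such as `suspect_meningitis` and recursively look for rules whose conclusion matches it.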

Notable expert systems included MYCIN (1976, Stanford), which diagnosed bacterial infections with performance rivalling infectious disease specialists; DENDRAL (1969), which identified molecular structures from mass spectrometry data; and R1/XCON (1980, DEC), which configured VAX computer orders, saving DEC an estimated $40M/year.

The Japanese Fifth Generation Computer Project (1982–1992) was the apotheosis of the symbolic vision: a $400M government initiative to build massively parallel inference machines running Prolog. It was an ambitious bet that raw logical inference power would crack general intelligence. It did not.

Frames, scripts & knowledge representation

A parallel stream of symbolic research focused on how to represent knowledge in structures richer than flat logical propositions.

- Frames (Minsky, 1974): Structured records with slots for properties and default values. A "restaurant" frame would have slots for cuisine, price range, and seating, with defaults that could be overridden by specific instances.

- Scripts (Schank & Abelson, 1977): Stereotyped sequences of events. The "restaurant script" encodes: enter → be seated → read menu → order → eat → pay → leave. Understanding a story about a restaurant means recognising which script is active and which slots are filled.

- Semantic networks: Graph structures where nodes are concepts and edges are relations (is-a, has-a, part-of). These were later formalised into description logics and ontologies like Cyc (Lenat, 1984 to present), which attempted to hand-code all of common-sense knowledge: millions of assertions like "water is wet" and "dead people do not buy groceries."
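The frame idea from the list above (slots with overridable defaults) maps loosely onto a record type with default field values. The sketch below assumes a Python dataclass as the stand-in; the slot names follow the restaurant example, while the default values themselves are invented for illustration.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class RestaurantFrame:
    # Default slot values (illustrative assumptions, not from Minsky's paper).
    cuisine: str = "unspecified"
    price_range: str = "moderate"
    seating: str = "table service"


generic = RestaurantFrame()
# A specific instance overrides some slots and inherits the remaining defaults.
sushi_bar = replace(generic, cuisine="Japanese", seating="counter")
```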

The knowledge representation problem ultimately proved to be symbolic AI's Achilles' heel: the real world contains too much implicit knowledge to encode by hand, and the boundary conditions of every rule have boundary conditions of their own. This difficulty is closely related to the frame problem, first articulated by McCarthy and Hayes (1969), which remains unsolved in its general form.

The first AI winter

By the mid-1970s, the gap between AI's promises and its deliverables had become embarrassing. James Lighthill's 1973 report to the British Science Research Council delivered a devastating assessment: AI had failed to achieve the "grandiose objectives" set by its pioneers, and its methods did not generalise beyond toy domains.

Key criticisms:

- Combinatorial explosion: Search-based methods hit exponential walls on any non-trivial problem. The number of possible states in chess (on the order of $10^{44}$ legal positions) or Go (about $10^{170}$) made brute-force approaches hopeless.

- Brittleness: Symbolic systems failed catastrophically on inputs slightly outside their design specification. SHRDLU worked perfectly in its blocks world and not at all outside it.

- Knowledge bottleneck: Expert systems required months of painstaking "knowledge engineering" to encode rules that any human expert could apply effortlessly.

Funding dried up on both sides of the Atlantic. DARPA slashed AI budgets. The British government defunded most AI research following the Lighthill report. The first AI winter had begun, and it would last roughly a decade.

Connectionism & neural networks

The roots of connectionism are as old as symbolic AI itself, but for decades neural networks lived in the shadow of the symbolic mainstream.

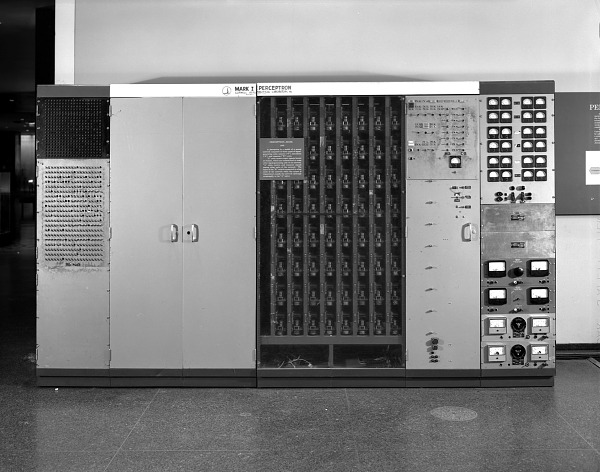

The perceptron & its limits

In 1958, Frank Rosenblatt at Cornell built the Mark I Perceptron, a hardware implementation of a single-layer neural network that could learn to classify visual patterns. The New York Times reported it as the "embryo of an electronic computer that [the Navy] expects will be able to walk, talk, see, write, reproduce itself and be conscious of its existence."

The perceptron computes a weighted sum of inputs and applies a threshold:

$$\hat{y} = \begin{cases} 1 & \text{if } \mathbf{w} \cdot \mathbf{x} + b > 0 \\ 0 & \text{otherwise} \end{cases}$$

The perceptron convergence theorem guarantees that if the data is linearly separable, the learning rule will find a separating hyperplane in a finite number of steps. This was a genuine theoretical achievement.
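The learning rule that the convergence theorem refers to can be sketched in a few lines of Python. This is a minimal version under stated assumptions: binary labels in {0, 1}, a threshold at zero, a learning rate of 1, and the linearly separable AND function as toy data. The function names are illustrative.

```python
from typing import List, Sequence, Tuple

Example = Tuple[Sequence[float], int]


def predict(w: Sequence[float], b: float, x: Sequence[float]) -> int:
    """Weighted sum followed by a hard threshold."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b > 0 else 0


def train_perceptron(data: List[Example], lr: float = 1.0,
                     max_epochs: int = 100) -> Tuple[List[float], float]:
    """Perceptron rule: nudge the weights toward each misclassified example."""
    w = [0.0] * len(data[0][0])
    b = 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for x, y in data:
            error = y - predict(w, b, x)  # -1, 0, or +1
            if error != 0:
                w = [wi + lr * error * xi for wi, xi in zip(w, x)]
                b += lr * error
                mistakes += 1
        if mistakes == 0:  # a full pass with no errors: converged
            break
    return w, b


AND_DATA: List[Example] = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
w, b = train_perceptron(AND_DATA)
```

On non-separable data the loop never reaches a mistake-free pass and simply stops at `max_epochs`.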

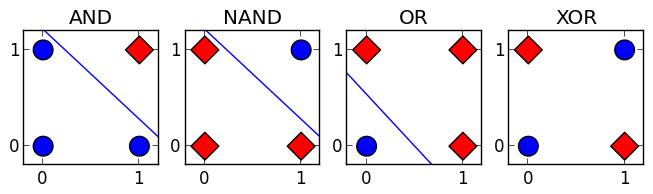

In 1969, Minsky and Papert published Perceptrons, a rigorous mathematical analysis showing that single-layer perceptrons cannot compute certain functions, most famously XOR:

The XOR function: $x_1 \oplus x_2 = 1$ exactly when one input is 1 and the other is 0. The four points $(0,0)$, $(0,1)$, $(1,0)$, $(1,1)$ with labels $0$, $1$, $1$, $0$ are not linearly separable: no single hyperplane can separate the classes.

Multi-layer networks can compute XOR, but Minsky and Papert argued (and were widely interpreted as proving) that there was no known learning algorithm for multi-layer networks. This perception (partly fair, partly exaggerated) effectively killed neural network funding for over a decade.
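To make the claim that multi-layer networks can compute XOR concrete, here is a small hand-wired example. The weights are chosen by hand (an OR unit and a NAND unit feeding an AND unit), not learned, and the specific values are just one of many choices that work.

```python
def step(z: float) -> int:
    """Hard threshold activation."""
    return 1 if z > 0 else 0


def xor_net(x1: int, x2: int) -> int:
    h_or = step(x1 + x2 - 0.5)        # fires if at least one input is 1
    h_nand = step(-x1 - x2 + 1.5)     # fires unless both inputs are 1
    return step(h_or + h_nand - 1.5)  # AND of the two hidden units


outputs = [xor_net(a, b) for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]]
```

The hidden layer remaps the four input points so that the output unit faces a linearly separable problem, which is exactly what a single layer cannot do for itself.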

Backpropagation & the revival

The key missing piece was an efficient algorithm for training multi-layer networks. Backpropagation, the application of the chain rule to compute gradients through a computational graph, had been discovered multiple times (Werbos 1974, Parker 1985), but it was the landmark 1986 paper by Rumelhart, Hinton & Williams that demonstrated its practical power and ignited the connectionist revival.

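For a network with layers $l = 1, \dots, L$, the forward pass computes:

$$\mathbf{z}^{(l)} = \mathbf{W}^{(l)} \mathbf{a}^{(l-1)} + \mathbf{b}^{(l)}, \qquad \mathbf{a}^{(l)} = \sigma(\mathbf{z}^{(l)})$$

where $\sigma$ is a nonlinear activation function and $\mathbf{a}^{(0)} = \mathbf{x}$ is the input. Given a loss $\mathcal{L}$, backpropagation computes:

$$\boldsymbol{\delta}^{(l)} = \frac{\partial \mathcal{L}}{\partial \mathbf{z}^{(l)}} = \left( (\mathbf{W}^{(l+1)})^\top \boldsymbol{\delta}^{(l+1)} \right) \odot \sigma'(\mathbf{z}^{(l)}), \qquad \frac{\partial \mathcal{L}}{\partial \mathbf{W}^{(l)}} = \boldsymbol{\delta}^{(l)} (\mathbf{a}^{(l-1)})^\top$$

The gradient with respect to each layer's preactivations is computed recursively from the output layer backward, hence "backpropagation." Stochastic gradient descent then updates weights: $\mathbf{W}^{(l)} \leftarrow \mathbf{W}^{(l)} - \eta \, \partial \mathcal{L} / \partial \mathbf{W}^{(l)}$, where $\eta$ is the learning rate.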
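One way to build confidence in the backward recursion is to compare it with a finite-difference estimate on a tiny network. The sketch below assumes a 2-2-1 network with tanh hidden units, a single linear output, and squared-error loss; the weights and the data point are arbitrary illustrative values, and only the first-layer weight gradient is checked.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def forward(W1: Matrix, b1: List[float], W2: List[float], b2: float,
            x: List[float]) -> Tuple[List[float], float]:
    """z1 = W1 x + b1, a1 = tanh(z1), z2 = W2 . a1 + b2 (linear output)."""
    z1 = [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(W1, b1)]
    a1 = [math.tanh(z) for z in z1]
    z2 = sum(w * a for w, a in zip(W2, a1)) + b2
    return a1, z2


def loss(W1, b1, W2, b2, x, y) -> float:
    _, z2 = forward(W1, b1, W2, b2, x)
    return 0.5 * (z2 - y) ** 2


def backprop_grad_W1(W1, b1, W2, b2, x, y) -> Matrix:
    a1, z2 = forward(W1, b1, W2, b2, x)
    delta2 = z2 - y  # dL/dz2 for squared error with a linear output
    # delta1 = (W2^T delta2) elementwise-times tanh'(z1), with tanh' = 1 - a1^2
    delta1 = [W2[j] * delta2 * (1.0 - a1[j] ** 2) for j in range(len(a1))]
    return [[d * xi for xi in x] for d in delta1]  # outer product delta1 x^T


def numeric_grad_W1(W1, b1, W2, b2, x, y, eps: float = 1e-6) -> Matrix:
    """Central finite differences, perturbing one weight at a time."""
    grad = []
    for row in W1:
        grad_row = []
        for i, original in enumerate(row):
            row[i] = original + eps
            loss_up = loss(W1, b1, W2, b2, x, y)
            row[i] = original - eps
            loss_down = loss(W1, b1, W2, b2, x, y)
            row[i] = original  # restore the weight
            grad_row.append((loss_up - loss_down) / (2 * eps))
        grad.append(grad_row)
    return grad


W1, b1 = [[0.5, -0.3], [0.8, 0.2]], [0.1, -0.1]
W2, b2 = [1.0, -1.5], 0.05
x, y = [1.0, 2.0], 0.7

analytic = backprop_grad_W1(W1, b1, W2, b2, x, y)
numeric = numeric_grad_W1(W1, b1, W2, b2, x, y)
max_diff = max(abs(a - n) for ra, rn in zip(analytic, numeric)
               for a, n in zip(ra, rn))
```

The two gradients should agree to within numerical noise; a large `max_diff` in a check like this usually points to a sign or indexing error in the backward pass.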

The 1986 paper showed that multi-layer networks trained with backpropagation could learn internal representations, discovering features that were never explicitly programmed. This was the connectionist dream realised: meaningful structure emerging from data alone.

"What the networks learn are not rules but regularities." David Rumelhart

The second AI winter

Despite the excitement of the connectionist revival, the late 1980s and early 1990s saw both paradigms hit walls simultaneously.

- Expert systems collapsed commercially. Maintaining enormous rule bases proved impossibly expensive. Lisp machine companies went bankrupt. The Japanese Fifth Generation Project ended in 1992 without achieving its goals.

- Neural networks couldn't scale. Without GPU hardware, training networks with more than a few hidden layers was computationally infeasible. The vanishing gradient problem (gradients shrinking exponentially through deep layers) made deep networks appear untrainable.

- SVMs and kernel methods (Vapnik, 1995) offered superior classification performance with rigorous theoretical guarantees, further marginalising neural networks in the 1990s machine learning community.
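The vanishing gradient problem named in the list above can be illustrated with a back-of-the-envelope calculation. The sketch assumes sigmoid activations, unit weights, and pre-activations of zero, which is the best case for a sigmoid because its derivative peaks at 0.25 there; real networks differ, so treat the numbers as indicative only.

```python
import math


def sigmoid_prime(z: float) -> float:
    s = 1.0 / (1.0 + math.exp(-z))
    return s * (1.0 - s)


def gradient_scale(depth: int, z: float = 0.0, weight: float = 1.0) -> float:
    """Product of the per-layer factors |weight * sigmoid'(z)| along one path."""
    return (abs(weight) * sigmoid_prime(z)) ** depth


# How much of the output gradient survives after passing back through n layers.
scales = {depth: gradient_scale(depth) for depth in (1, 5, 10, 20)}
```

Even in this favourable setting the signal shrinks by a factor of four per layer, so after ten layers less than a millionth of it remains.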

The second AI winter (roughly 1987–1993) was less about funding cuts and more about a crisis of confidence. The field fragmented: statistical machine learning (SVMs, random forests, Bayesian methods) came to dominate, while both pure symbolic and pure connectionist approaches fell out of fashion.

The deep learning revolution

Three converging forces ended the second winter and inaugurated the current era of neural network dominance:

- Data: The internet produced datasets of previously unimaginable scale: ImageNet (14M labelled images), Common Crawl (petabytes of text), YouTube (500 hours of video uploaded per minute).

- Compute: GPUs, originally designed for graphics, turned out to be ideal for the matrix multiplications at the heart of neural network training. NVIDIA's CUDA (2007) made GPU programming accessible.

- Algorithms: Key innovations (ReLU activations, which mitigate vanishing gradients; dropout regularisation; batch normalisation; residual connections) made very deep networks trainable.

ImageNet & AlexNet

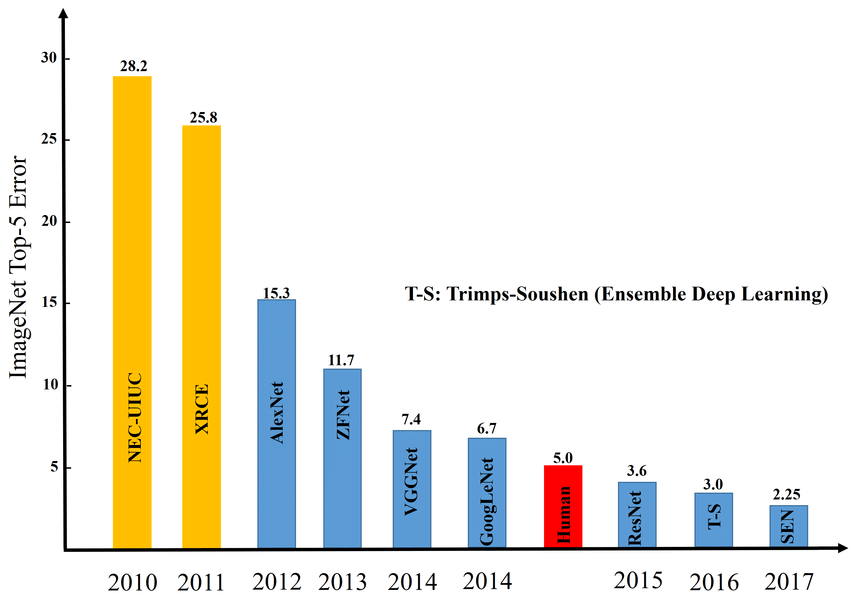

The watershed moment was the 2012 ImageNet Large Scale Visual Recognition Challenge. AlexNet (Krizhevsky, Sutskever & Hinton), a convolutional neural network with 60 million parameters trained on two GTX 580 GPUs, achieved a top-5 error rate of 15.3%, obliterating the runner-up at 26.2%. The margin was so large that it effectively ended the debate in computer vision: deep learning worked, and nothing else came close.

The error rate progression on ImageNet tells the story of the revolution:

- 2011: 25.8% (hand-engineered features + SVM)

- 2012: 15.3% (AlexNet, a deep CNN)

- 2014: 6.7% (GoogLeNet / VGGNet)

- 2015: 3.6% (ResNet-152, surpassing the estimated human error rate of ~5%)

Within three years of AlexNet, neural networks had gone from curiosity to state of the art (SOTA) across vision, speech, and NLP.

Attention & Transformers

The next revolution came from sequence modelling. Recurrent Neural Networks (RNNs) and their LSTM/GRU variants had dominated NLP, but they processed tokens sequentially, limiting parallelism and struggling with long-range dependencies.

In 2017, Vaswani et al. published "Attention Is All You Need", introducing the Transformer architecture. The core mechanism is scaled dot-product attention:

$$\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\left(\frac{Q K^\top}{\sqrt{d_k}}\right) V$$

Where $Q$ (queries), $K$ (keys), and $V$ (values) are linear projections of the input, and $d_k$ is the key dimension. The scaling factor $1/\sqrt{d_k}$ prevents the dot products from growing too large in high dimensions.
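Scaled dot-product attention, softmax(Q Kᵀ / sqrt(d_k)) V in the paper's notation, can be sketched in plain Python for small matrices. This is an illustrative single-head version with no masking, batching, or learned projections; real implementations use tensor libraries, and the toy Q, K, V values below are arbitrary.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(row: List[float]) -> List[float]:
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(Q: Matrix, K: Matrix, V: Matrix) -> Matrix:
    """For each query: score every key, normalise, then average the values."""
    d_k = len(K[0])
    out = []
    for q in Q:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in K]
        weights = softmax(scores)
        out.append([sum(w * v[j] for w, v in zip(weights, V))
                    for j in range(len(V[0]))])
    return out


# Toy inputs: each query matches one key more strongly than the other.
Q = [[1.0, 0.0], [0.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0]]
V = [[10.0, 0.0], [0.0, 10.0]]
result = attention(Q, K, V)
```

Each output row is a weighted average of the value rows, with more weight on the value whose key best matches the query.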

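Multi-head attention runs $h$ parallel attention operations with different learned projections:

$$\mathrm{MultiHead}(Q, K, V) = \mathrm{Concat}(\mathrm{head}_1, \dots, \mathrm{head}_h)\, W^O, \qquad \mathrm{head}_i = \mathrm{Attention}(Q W_i^Q, K W_i^K, V W_i^V)$$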

The Transformer processes all positions simultaneously (not sequentially), enabling massive parallelism on GPUs. This architectural innovation is the foundation of GPT, BERT, PaLM, Claude, and virtually every frontier language model.

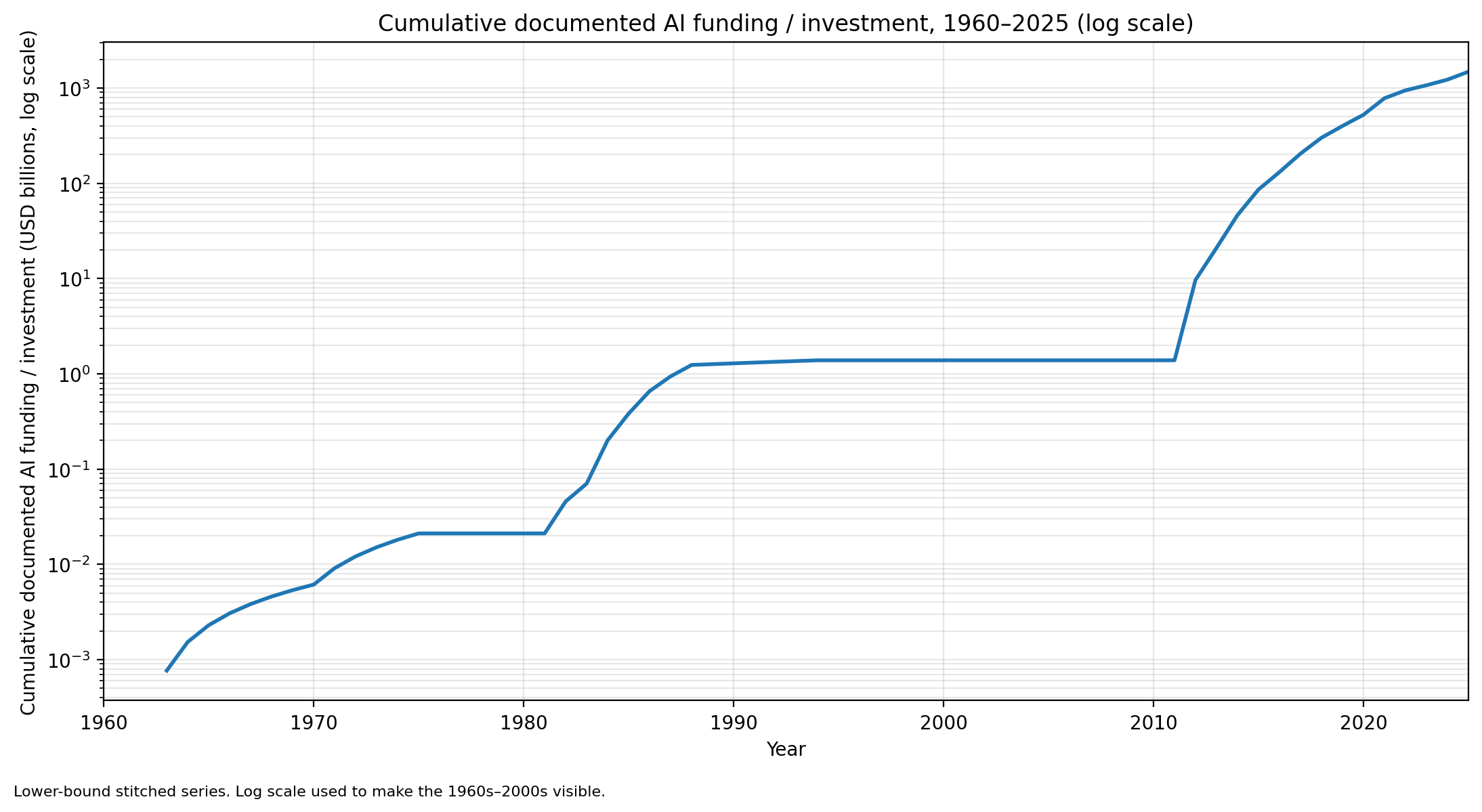

Scaling laws

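Perhaps the most surprising empirical discovery of the deep learning era is that neural network performance follows remarkably predictable scaling laws. Kaplan et al. (2020) at OpenAI showed that language model loss decreases as a power law in three variables:

$$L(N) \propto N^{-\alpha_N}, \qquad L(D) \propto D^{-\alpha_D}, \qquad L(C) \propto C^{-\alpha_C}$$

Where $N$ is the number of parameters, $D$ is the dataset size, $C$ is the compute budget, and the fitted exponents are roughly $\alpha_N \approx 0.076$, $\alpha_D \approx 0.095$, and $\alpha_C \approx 0.050$. The loss decreases smoothly and predictably as any of these resources increases (provided the other two are not the bottleneck), with no sign of plateauing over the ranges studied.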
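To get a feel for what a power law of the form L(x) ∝ x^(-α) implies, the sketch below computes how the loss changes when one resource is scaled up. It assumes a pure power law with no irreducible-loss term and uses 0.076 as an approximate parameter-count exponent from Kaplan et al.; treat the value and the function names as illustrative.

```python
def loss_ratio(scale_factor: float, alpha: float) -> float:
    """Factor by which loss changes when a resource is multiplied by
    scale_factor, assuming L(x) is proportional to x ** (-alpha)."""
    return scale_factor ** (-alpha)


def scale_needed(target_ratio: float, alpha: float) -> float:
    """Resource multiplier needed to bring loss to target_ratio of its value."""
    return target_ratio ** (-1.0 / alpha)


ALPHA_N = 0.076  # approximate exponent for parameter count (assumed here)

ratio_10x = loss_ratio(10.0, ALPHA_N)         # effect of a 10x larger model
params_to_halve = scale_needed(0.5, ALPHA_N)  # multiplier needed to halve loss
```

Under these assumptions a model ten times larger lowers the loss by only about 16%, and halving the loss through parameters alone would take a model several thousand times larger. Small exponents are why scaling is both predictable and expensive.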

This has driven the "scaling hypothesis": that simply making models larger, training them on more data, and using more compute will continue to yield capability improvements. The progression from GPT-2 (1.5B parameters) to GPT-3 (175B) to GPT-4 (rumoured ~1.7T) has been broadly consistent with this so far.

What symbolic AI does well

Despite the neural network revolution, symbolic methods retain decisive advantages in several domains:

- Formal verification & theorem proving: Systems like Lean, Coq, and Isabelle/HOL use symbolic methods to produce machine-checked proofs. No neural network can, on its own, guarantee that a mathematical proof is correct.

- Interpretability & auditability: A decision tree or a set of production rules can be read and understood by a human. A neural network's "reasoning" is distributed across billions of parameters and is fundamentally opaque without additional interpretability tools.

- Compositional generalisation: Symbolic systems generalise perfectly to novel combinations of known primitives. Given "John loves Mary" and the rule "if X loves Y, X will help Y," a symbolic system immediately infers "John will help Mary" regardless of whether that specific combination appeared in training. Neural networks notoriously struggle with this kind of systematic compositionality (Lake & Baroni, 2018).

- Causal reasoning: Pearl's do-calculus and structural causal models provide a formal framework for distinguishing causation from correlation. Neural networks learn correlations; they cannot, in general, distinguish cause from effect without additional structure.

- Small-data reasoning: An expert system can encode knowledge from a single textbook. A neural network typically needs thousands or millions of examples to learn equivalent competence.
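The compositional generalisation point in the list above comes down to variable binding: a rule stated over X and Y applies to any constants, seen before or not. A minimal sketch, assuming facts are (subject, relation, object) triples and hard-coding the single "if X loves Y, X will help Y" rule; the relation names and the made-up constants are illustrative.

```python
from typing import Set, Tuple

Fact = Tuple[str, str, str]  # (subject, relation, object)


def apply_loves_implies_helps(facts: Set[Fact]) -> Set[Fact]:
    """Rule: if X loves Y, then X will help Y. X and Y bind to any constants."""
    derived = {(x, "will_help", y) for (x, rel, y) in facts if rel == "loves"}
    return facts | derived


known = apply_loves_implies_helps({("John", "loves", "Mary")})
# Invented names the system has never encountered: the rule still applies.
novel = apply_loves_implies_helps({("Zorblax", "loves", "Quux")})
```

The rule never refers to specific names, so there is nothing to generalise from: novel combinations are handled by construction rather than by learning.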

What neural networks do well

- Perception: Vision, speech, audio, video, and any other high-dimensional sensory signal. Neural networks dominate benchmarks in object detection, speech recognition, medical imaging, and more.

- Natural language: Large language models generate fluent text, translate between languages, summarise documents, and answer questions with remarkable (though imperfect) competence.

- Learning from raw data: Neural networks require little or no manual feature engineering. Given enough data, they learn to extract relevant features automatically, often discovering representations that humans never considered.

- Fuzzy pattern matching: The real world is noisy, ambiguous, and continuous. Neural networks handle this gracefully; symbolic systems require every edge case to be anticipated and encoded.

- Transfer learning: A model pretrained on a large corpus can be fine-tuned for downstream tasks with very little additional data, enabling practical AI deployment in data-scarce domains.

- Scalability: Neural networks benefit predictably from more data, parameters, and compute. Symbolic systems hit diminishing returns as rule bases grow (the knowledge acquisition bottleneck).

Neuro-symbolic AI

The recognition that neither paradigm alone is sufficient has driven a growing research programme in neuro-symbolic AI: systems that combine neural perception and learning with symbolic reasoning and structure.

The argument is straightforward: neural networks excel at System 1 cognition (fast, intuitive, perceptual) while symbolic methods excel at System 2 cognition (slow, deliberate, logical). Human intelligence uses both. Why shouldn't AI?

Approaches & architectures

- Neural networks with symbolic outputs: A neural network perceives the world and produces symbolic representations that are then fed into a logic engine. Example: Neural Theorem Proving (Rocktäschel & Riedel, 2017), in which a neural network learns to select which inference rules to apply.

- Differentiable programming over symbolic structures: Neural Logic Machines (Dong et al., 2019) implement differentiable versions of logical operators, allowing end-to-end gradient-based training on symbolic tasks. Similarly, ∂ILP (Evans & Grefenstette, 2018) makes inductive logic programming differentiable.

- Knowledge-graph-augmented neural networks: Systems like KGAT (Knowledge Graph Attention Networks) use graph neural networks to reason over structured knowledge bases, combining the relational structure of symbolic knowledge with neural attention mechanisms.

- LLMs as reasoning engines with tool use: Modern LLMs (GPT-4, Claude) can call external symbolic tools (calculators, code interpreters, databases, theorem provers), blending neural language understanding with symbolic computation. This is arguably the most commercially deployed form of neuro-symbolic AI today.

- Program synthesis: DreamCoder (Ellis et al., 2021) learns to write programs in a domain-specific language, building a reusable library of symbolic abstractions from neural search. It combines neural perception (to guide search) with symbolic structure (the programs themselves are interpretable and compositional).
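The tool-use pattern from the list above can be sketched as a division of labour: a model proposes a formal expression, and a symbolic tool evaluates it exactly. In the sketch below the "model" is a hard-coded stand-in function (purely hypothetical, no real LLM API is called), and the tool is a small arithmetic evaluator built on Python's standard `ast` module.

```python
import ast
import operator
from typing import Callable, Union

Number = Union[int, float]

_OPS = {ast.Add: operator.add, ast.Sub: operator.sub,
        ast.Mult: operator.mul, ast.Div: operator.truediv}


def exact_eval(expr: str) -> Number:
    """Symbolic tool: evaluate +, -, *, / over numeric literals only."""
    def walk(node: ast.AST) -> Number:
        if isinstance(node, ast.Expression):
            return walk(node.body)
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -walk(node.operand)
        raise ValueError("unsupported expression")
    return walk(ast.parse(expr, mode="eval"))


def answer(question: str, propose_expression: Callable[[str], str]) -> str:
    """Neural side proposes an expression; symbolic side computes it exactly."""
    expr = propose_expression(question)
    return f"{expr} = {exact_eval(expr)}"


def fake_model(question: str) -> str:
    # Stand-in for an LLM call; a real system would generate this string.
    return "123456789 * 987654321"


reply = answer("What is 123456789 times 987654321?", fake_model)
```

The evaluator rejects anything that is not plain arithmetic, which reflects a common design choice: the symbolic side stays small and checkable while the neural side handles the open-ended language.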

Case studies

- AlphaGeometry (DeepMind, 2024): Solved International Mathematical Olympiad geometry problems at a level approaching that of an average gold medallist by combining a neural language model (to propose auxiliary constructions) with a symbolic deduction engine (to verify and chain proofs). Neither component alone matched the combined system.

- AlphaFold 2 (DeepMind, 2020): While primarily neural, AlphaFold incorporates physical constraints (bond angles, steric clashes) as structured inductive biases, effectively embedding symbolic domain knowledge into the architecture.

- Wolfram|Alpha + ChatGPT: The integration of Wolfram's symbolic computation engine with OpenAI's LLM allows the system to understand natural language queries (neural) and compute exact answers (symbolic), a practical neuro-symbolic product used by millions.

Philosophical underpinnings

The symbolic vs connectionist debate maps onto deep questions in philosophy of mind:

- Physical Symbol System Hypothesis (Newell & Simon, 1976): "A physical symbol system has the necessary and sufficient means for general intelligent action." This is the foundational claim of GOFAI: intelligence is symbol manipulation, and nothing more is needed.

- Chinese Room Argument (Searle, 1980): A person in a room following Chinese symbol manipulation rules can produce correct Chinese responses without understanding Chinese. Searle argued this refutes strong AI: syntax is not sufficient for semantics. Connectionists note that the argument targets symbol manipulation specifically; it is less clear how it applies to distributed neural representations.

- Embodied cognition: Intelligence may require a body, that is, sensorimotor interaction with a physical environment. This challenges both disembodied symbolic reasoning and text-only neural networks. Roboticists like Rodney Brooks argued in the 1990s that intelligence emerges from situated behaviour, not internal representation of any kind.

- The binding problem: How does a neural network bind features into coherent objects? How does "the red square" remain distinct from "the blue circle"? Symbolic systems solve this trivially with variable binding; neural networks continue to struggle with systematic feature binding.

"The question is not whether machines can think, but whether what machines do can reasonably be called thinking." paraphrased from Dijkstra

Where we are heading

The current state of AI is dominated by neural networks, specifically large Transformer-based language models. But the limitations are becoming increasingly clear:

- Hallucination: LLMs generate plausible but factually incorrect outputs. They have no grounded model of truth, only statistical patterns over training text. Symbolic verification and retrieval-augmented generation (RAG) are the standard mitigations.

- Reasoning failures: Despite impressive performance on benchmarks, LLMs still fail on multi-step logical reasoning, especially when problems require systematic search or backtracking. Chain-of-thought prompting helps but doesn't solve the underlying issue.

- Lack of world models: LLMs do not build causal models of the world. They cannot reliably answer counterfactual questions ("what would happen if X had not occurred?") because they have no mechanism for interventional reasoning.

These are precisely the areas where symbolic methods excel. The frontier of AI research is increasingly neuro-symbolic, not because it is fashionable, but because neither paradigm alone can deliver the reliability, interpretability, and reasoning depth that real-world applications demand.

The question that opened this post (is intelligence symbol manipulation or pattern matching?) may be a false dichotomy. The brain uses both: fast pattern recognition and slow deliberate reasoning, perception and logic, System 1 and System 2. The next generation of AI systems will likely do the same.

References

Newell, A. & Simon, H.A. (1976). "Computer Science as Empirical Inquiry: Symbols and Search." Communications of the ACM, 19(3), 113–126. doi:10.1145/360018.360022

Minsky, M. & Papert, S. (1969). Perceptrons: An Introduction to Computational Geometry. MIT Press.

Rumelhart, D.E., Hinton, G.E. & Williams, R.J. (1986). "Learning representations by back-propagating errors." Nature, 323, 533–536. doi:10.1038/323533a0

Krizhevsky, A., Sutskever, I. & Hinton, G.E. (2012). "ImageNet Classification with Deep Convolutional Neural Networks." NeurIPS.

Vaswani, A. et al. (2017). "Attention Is All You Need." NeurIPS. arXiv:1706.03762

Kaplan, J. et al. (2020). "Scaling Laws for Neural Language Models." arXiv preprint. arXiv:2001.08361

Lake, B.M. & Baroni, M. (2018). "Generalization without Systematicity: On the Compositional Skills of Sequence-to-Sequence Recurrent Networks." ICML. arXiv:1711.00350

Marcus, G. (2020). "The Next Decade in AI: Four Steps Towards Robust Artificial Intelligence." arXiv preprint. arXiv:2002.06177

Ellis, K. et al. (2021). "DreamCoder: Growing Generalizable, Interpretable Knowledge with Wake-Sleep Bayesian Program Learning." PLDI. doi:10.1145/3453483.3454080

Trinh, T.H. et al. (2024). "Solving Olympiad Geometry without Human Demonstrations." Nature, 625, 476–482. doi:10.1038/s41586-023-06747-5

Lighthill, J. (1973). "Artificial Intelligence: A General Survey." UK Science Research Council Report.

Searle, J. (1980). "Minds, Brains, and Programs." Behavioral and Brain Sciences, 3(3), 417–424. doi:10.1017/S0140525X00005756

McCarthy, J. & Hayes, P.J. (1969). "Some Philosophical Problems from the Standpoint of Artificial Intelligence." Machine Intelligence, 4, 463–502.